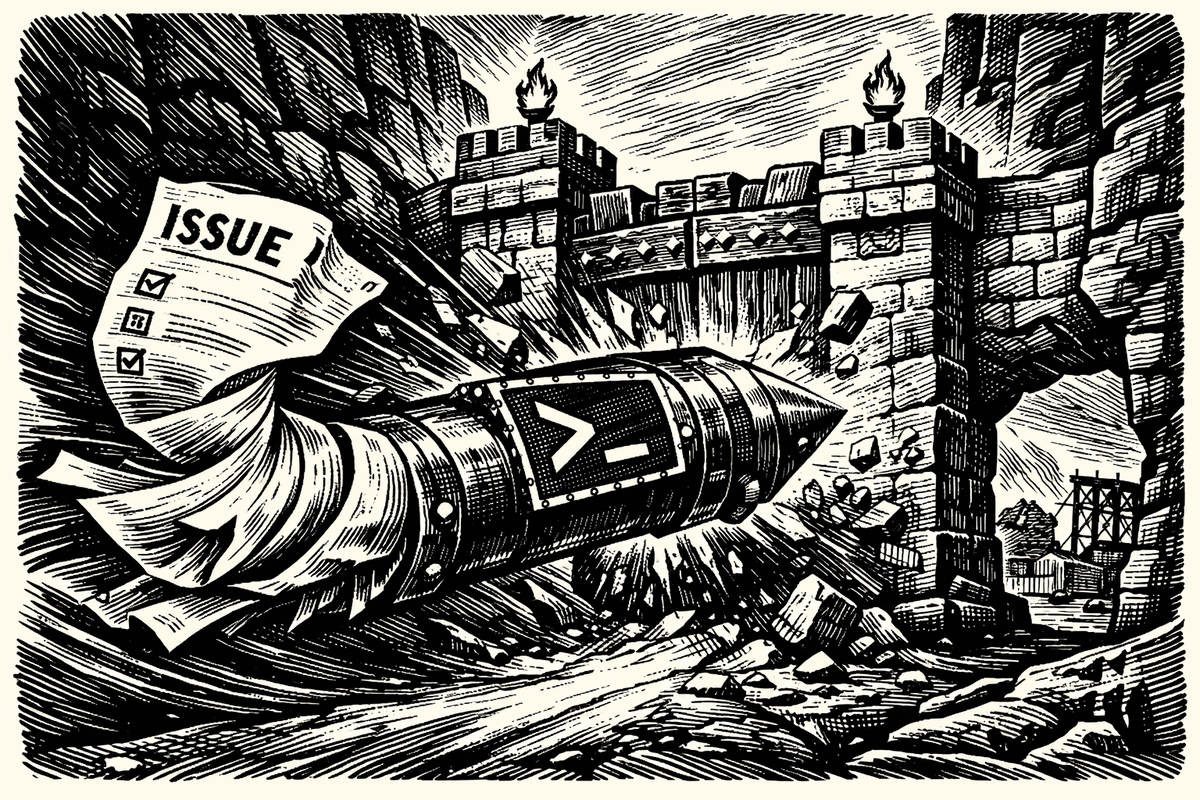

An attacker put untrusted text into a GitHub issue, an AI workflow turned that text into shell execution inside CI, the runner poisoned shared cache state, a later privileged publish job restored it, and Cline ended up with an unauthorized npm release. That is the chain that matters.

It is tempting to call this a prompt injection story and stop there. But prompt injection is only the opening move. The useful lesson is simpler: an agent that reads untrusted text should not be able to turn that text into shell commands inside your CI pipeline.

As Adnan Khan's writeup shows, Cline had an AI issue-triage workflow that any GitHub user could trigger. That workflow had access to tools including Bash, ran in default-branch context, and gave attacker-controlled issue content a path to real code execution on the runner. From there, the attack moved through GitHub Actions cache poisoning into more privileged nightly publishing workflows and ultimately into an unauthorized Cline release published to npm. Cline's own post-mortem describes the incident as a combination of prompt injection, cache poisoning, and credential theft.

The issue title was the trigger. The shell was the problem.

Why this became a supply-chain incident

The triage workflow itself was not the destination. The pivot mattered more than the prompt.

GitHub has been explicit that workflows running in default-branch context can poison the Actions cache and move laterally into more privileged workflows, even when the first workflow does not itself hold the secret you care about. That warning maps directly onto what happened here. The issue-triage workflow provided code execution. The cache became the bridge. The nightly publish jobs restored poisoned state. The publish token was exposed. Then an unauthorized Cline release was published to npm with a modified package.json that added a postinstall script to install openclaw@latest. (GitHub's guidance covers this class of workflow risk directly.)
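The pivot is easier to see in a deliberately simplified toy model. The Python below is not how GitHub Actions implements its cache: real caches have branch scoping, immutable entries, and eviction rules that this ignores, and `ToyCache`, the trust labels, and the key name are all hypothetical. It models one idea only: when untrusted and privileged jobs share a single cache namespace, whatever the untrusted job saves under a key the publish job restores becomes the publish job's input.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToyCache:
    """Hypothetical CI cache shared by several workflows (a toy, not GitHub's cache).

    Entries are keyed by (namespace, key). With scoped=False every job reads
    and writes one shared namespace; with scoped=True each trust level gets
    its own namespace.
    """

    scoped: bool
    entries: dict = field(default_factory=dict)

    def _namespace(self, trust: str) -> str:
        return trust if self.scoped else "shared"

    def save(self, trust: str, key: str, value: str) -> None:
        # Entries are immutable once written, so the first writer wins.
        self.entries.setdefault((self._namespace(trust), key), value)

    def restore(self, trust: str, key: str) -> Optional[str]:
        return self.entries.get((self._namespace(trust), key))


def nightly_publish_input(scoped: bool) -> str:
    cache = ToyCache(scoped=scoped)
    # The triage job, now running attacker commands, saves first under a key
    # the publish job is known to restore.
    cache.save("untrusted", "deps-linux-v1", "poisoned deps")
    # The privileged publish job restores that key before building.
    restored = cache.restore("release", "deps-linux-v1")
    return restored if restored is not None else "cache miss: clean install"


print(nightly_publish_input(scoped=False))  # poisoned deps
print(nightly_publish_input(scoped=True))   # cache miss: clean install
```

Partitioning by trust level turns the poisoned entry into a cache miss for the publish job, which then falls back to a clean install instead of restoring attacker-controlled state.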

That is why this incident matters beyond Cline. It shows how fast "the model followed the wrong instruction" becomes "your release pipeline did."

The agent proposes. The policy decides.

The pipeline needed fixing too

There is a shallow version of this argument that says the answer is just runtime enforcement and nothing else. That would be wrong.

There were ordinary CI/CD fixes that mattered here too: do not let untrusted issue-triggered workflows run in a context that can influence release jobs, scope workflow permissions aggressively, avoid static publish credentials where OIDC or other short-lived mechanisms exist, and treat cache sharing across trust boundaries as dangerous by default. Those are real controls, and teams should use them. GitHub's own guidance says as much.
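Some of those controls can be checked mechanically before anything runs. Below is a minimal, hypothetical lint in Python. It assumes workflows have already been parsed into plain dicts shaped like GitHub Actions YAML (`on`, `permissions`, `jobs`, `steps`); the function name, the trigger list, and both example workflows are illustrative, not taken from Cline's repository.

```python
import json

# Triggers whose event payload outside users can influence. Illustrative, not exhaustive.
UNTRUSTED_TRIGGERS = {"issues", "issue_comment", "pull_request_target"}


def audit_workflow(name: str, wf: dict) -> list[str]:
    """Flag a few risky patterns in one workflow that is already parsed into a dict."""
    findings = []
    # `on` may be a single event name, a list of names, or a mapping of names to filters.
    on = wf.get("on", {})
    events = {on} if isinstance(on, str) else set(on)
    untrusted = sorted(events & UNTRUSTED_TRIGGERS)
    if "permissions" not in wf:
        findings.append(f"{name}: no top-level permissions block")
    for job_name, job in wf.get("jobs", {}).items():
        for step in job.get("steps", []):
            # A declared cache step in a workflow that untrusted input can trigger.
            if untrusted and step.get("uses", "").startswith("actions/cache"):
                findings.append(f"{name}/{job_name}: cache step in a workflow triggered by {untrusted}")
            # A long-lived publish secret passed straight into a step.
            if "secrets.NPM_TOKEN" in json.dumps(step.get("env", {})):
                findings.append(f"{name}/{job_name}: static NPM_TOKEN; prefer short-lived OIDC-based publishing")
    return findings


# Hypothetical workflows, written as already-parsed dicts.
triage = {
    "on": {"issues": {"types": ["opened"]}},
    "jobs": {
        "triage": {
            "steps": [
                {"uses": "actions/cache@v4", "with": {"path": "~/.npm", "key": "npm-deps"}},
                {"run": "echo triage"},
            ]
        }
    },
}

publish = {
    "on": {"schedule": [{"cron": "0 3 * * *"}]},
    "permissions": {"contents": "read"},
    "jobs": {
        "publish": {
            "steps": [
                {"run": "npm publish", "env": {"NODE_AUTH_TOKEN": "${{ secrets.NPM_TOKEN }}"}},
            ]
        }
    },
}

for wf_name, wf in (("triage", triage), ("publish", publish)):
    for finding in audit_workflow(wf_name, wf):
        print(finding)
```

A check like this only sees what the YAML declares. It cannot see what arbitrary code does once it is already running on the runner.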

But those controls still leave the underlying question untouched:

Why was untrusted text allowed to reach a shell at all?

That is where runtime enforcement belongs.

What AgentSH would have stopped

AgentSH would not need to interpret the issue title better than the model. It would need to stop the first unauthorized side effect.

In an issue-triage workflow, the allowed behavior is narrow: read the issue, inspect repository context, and maybe write a triage artifact or comment. That is it.

So when the model tries to turn attacker-controlled issue text into npm install, that process spawn should be denied.

When it tries to reach an attacker-controlled host, that network connection should be denied.

When it tries to write into cache-relevant paths or mutate state that later workflows will restore, that file write should be denied.

When the job does not need a publish token, that token should not be present in the environment in the first place. And if it is present, it should be stripped or locked away from subprocesses.

This is not about making the model more obedient. It is about making the machine less gullible.

The exploit only works if the runtime lets instruction following become real process, file, and network activity. Block those transitions and the attack collapses at the first step that matters.
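The last of those controls, keeping publish credentials away from subprocesses, is the simplest to sketch. The Python below is a minimal illustration, not AgentSH's implementation: a hypothetical wrapper that launches every agent tool call with a scrubbed copy of the environment. The variable names match the env strip list in the example policy later in this post; `child_env` and `run_tool` are made-up helpers.

```python
import os
import subprocess
import sys

# Publish credentials to keep away from agent tool calls. The names match the
# example policy's env strip list; the helpers below are hypothetical.
STRIPPED = {"NPM_TOKEN", "NODE_AUTH_TOKEN", "VSCE_PAT", "OVSX_PAT"}


def child_env(parent: dict[str, str]) -> dict[str, str]:
    """Return a copy of the environment with publish credentials removed."""
    return {name: value for name, value in parent.items() if name not in STRIPPED}


def run_tool(argv: list[str]) -> subprocess.CompletedProcess:
    """Run one agent tool call with the scrubbed environment, never the parent's."""
    return subprocess.run(
        argv,
        env=child_env(dict(os.environ)),
        capture_output=True,
        text=True,
        check=False,
    )


if __name__ == "__main__":
    os.environ["NPM_TOKEN"] = "example-not-a-real-token"
    # A hijacked command that tries to read the token from its own environment.
    probe = [sys.executable, "-c", "import os; print(os.environ.get('NPM_TOKEN'))"]
    print(run_tool(probe).stdout.strip())  # None
```

Stripping is the fallback. On Linux, a process running as the same user can often still read a parent's environment through /proc, so the stronger fix is not to hand the token to the job at all.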

What that policy boundary looks like

Of course CI pipelines need to run npm install. Build jobs need package managers, test jobs need runtimes, publish jobs need credentials. That is not the issue.

The issue is that an AI agent running inside CI -- one whose input was an attacker-controlled issue title -- had the same execution authority as the build and publish steps around it. The triage agent did not need a general-purpose shell. It needed a task-shaped execution envelope.

```yaml
version: "1"

processes:
  default: deny
  allow:
    - /usr/bin/git
    - /usr/bin/grep
    - /bin/cat
    - /bin/ls

files:
  read:
    allow:
      - /workspace/**
  write:
    allow:
      - /workspace/.triage-output/**
      - /tmp/**
    deny:
      - ~/.npm/**
      - ~/.cache/**
      - /github/**
      - /workspace/.git/**

network:
  default: deny
  allow:
    - api.github.com:443

env:
  strip:
    - NPM_TOKEN
    - NODE_AUTH_TOKEN
    - VSCE_PAT
    - OVSX_PAT
```

No package managers. No general-purpose shells. No outbound network except the GitHub API. No publish credentials in the environment. The rest of the pipeline runs normally -- this policy scopes only the agent step, because that is the step where untrusted input meets execution.

This is not a prompt defense. It is an execution boundary.
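To make the evaluation concrete, here is a minimal Python sketch that mirrors the policy above as a dict and runs the attack's side effects through it. The matching rules are assumptions, not AgentSH's documented semantics: exact matches for process paths and host:port pairs, shell-style globs for file paths (via `fnmatch.fnmatchcase`, where `*` also crosses `/`), deny entries overriding allow entries, and anything unlisted denied. `decide`, `HOME`, and the attempted targets are hypothetical, and the env section is left out.

```python
from fnmatch import fnmatchcase

# Dict mirror of the example policy (env section omitted).
POLICY = {
    "processes": {"allow": ["/usr/bin/git", "/usr/bin/grep", "/bin/cat", "/bin/ls"]},
    "files": {
        "read": {"allow": ["/workspace/**"]},
        "write": {
            "allow": ["/workspace/.triage-output/**", "/tmp/**"],
            "deny": ["~/.npm/**", "~/.cache/**", "/github/**", "/workspace/.git/**"],
        },
    },
    "network": {"allow": ["api.github.com:443"]},
}

HOME = "/home/runner"  # assumed home directory, used to expand "~/" patterns


def _matches(path: str, patterns: list[str]) -> bool:
    expanded = [HOME + p[1:] if p.startswith("~/") else p for p in patterns]
    # fnmatchcase's "*" also matches "/", so "dir/**" covers every file below dir.
    return any(fnmatchcase(path, p) for p in expanded)


def decide(kind: str, target: str) -> str:
    """Return "allow" or "deny" for one side effect the agent attempts."""
    if kind == "exec":
        ok = target in POLICY["processes"]["allow"]
    elif kind == "connect":
        ok = target in POLICY["network"]["allow"]
    elif kind in ("read", "write"):
        rules = POLICY["files"][kind]
        # Must match an allow entry and no deny entry.
        ok = _matches(target, rules.get("allow", [])) and not _matches(target, rules.get("deny", []))
    else:
        ok = False  # unknown kinds of side effect fall through to default deny
    return "allow" if ok else "deny"


# The triage job's legitimate work, followed by the attack's next steps.
ATTEMPTS = [
    ("read", "/workspace/README.md"),
    ("exec", "/usr/bin/git"),
    ("write", "/workspace/.triage-output/labels.json"),
    ("exec", "/usr/bin/npm"),
    ("exec", "/bin/bash"),
    ("connect", "attacker.example:443"),
    ("write", HOME + "/.npm/_cacache/index-v5/poisoned"),
]

for kind, target in ATTEMPTS:
    print(f"{decide(kind, target):5}  {kind:7}  {target}")
```

Under these assumptions, the triage job's real work (reading the workspace, running git, writing its output) still goes through, while every step the attack needed next (spawning npm or a shell, reaching an outside host, writing into ~/.npm) comes back as a denial.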

The bigger lesson

People will read this incident and say the culprit was prompt injection. That is true, but incomplete.

Others will say the real culprit was GitHub Actions cache poisoning. That is also true, but incomplete.

The deeper culprit was ambient authority: untrusted text reached an agent, the agent had a shell, the shell sat inside CI, and CI sat on the path to release. Prompt injection lit the match. Cache poisoning spread the fire. But the fuel was a runtime with too much freedom.

Runtime enforcement does not fix the model. It fixes the blast radius.

Built by Canyon Road

We build Beacon and AgentSH to give security teams runtime control over AI tools and agents, whether supervised on endpoints or running unsupervised at scale. Policy enforced at the point of execution, not the prompt.
