The LiteLLM compromise is a useful case study in why supply chain defense is so hard.

This was not a typo-squatted package or a fake repo. A real package in a real ecosystem was compromised, malicious versions were published, and installation itself was enough to trigger malicious behavior. The attack was part of a broader campaign by TeamPCP that also hit Aqua Security's Trivy scanner and Checkmarx's KICS GitHub Actions -- three separate projects compromised using credentials stolen from CI/CD pipelines.

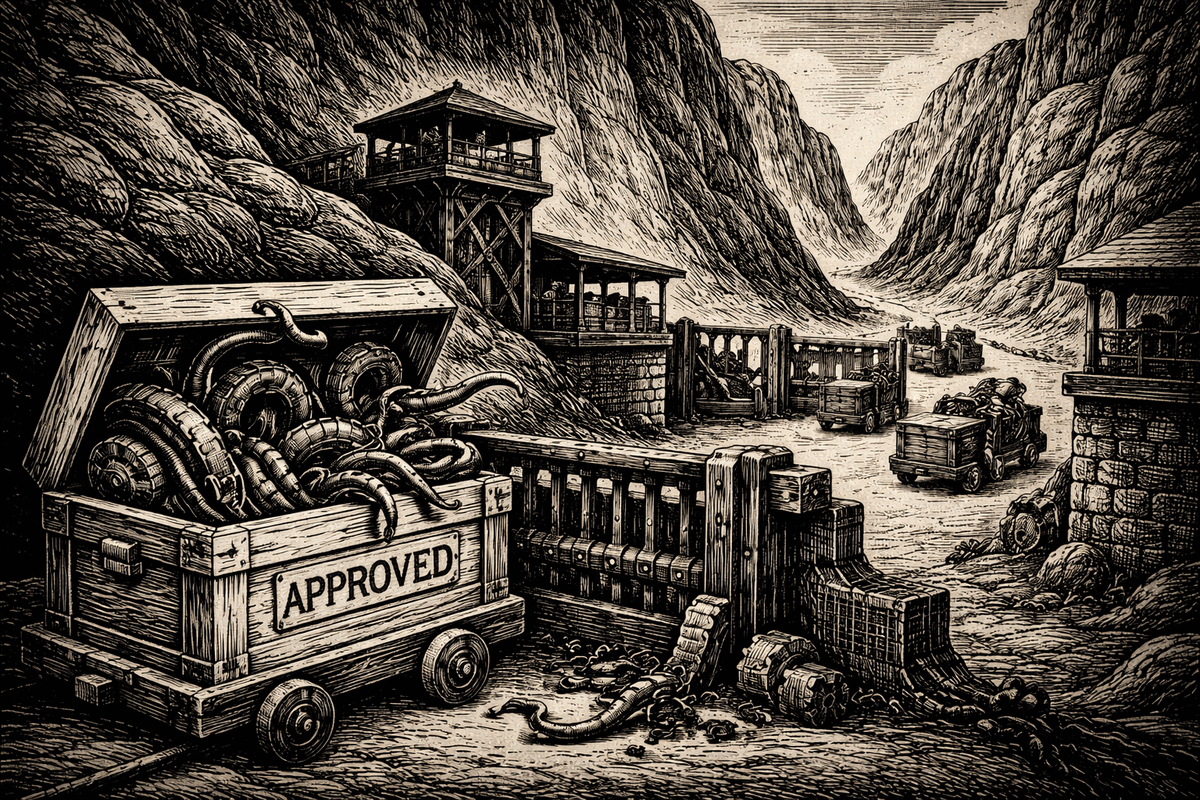

That is the hard part about modern supply chain attacks. By the time the code reaches your machine, it is already wearing a trusted costume.

The lesson is not that upstream controls failed and therefore do not matter. They do matter. Version pinning matters. Provenance matters. Trusted publishing matters. Narrow CI permissions matter.

The lesson is that none of those controls answer the question that matters most once a bad package lands in a real environment:

What happens if one gets in anyway?

What happened in LiteLLM matters beyond LiteLLM

The public writeups show the pattern clearly.

Compromised versions of the package were published to PyPI. One of them used a .pth startup hook so the payload would run automatically on Python startup. The payload harvested secrets, queried cloud metadata endpoints, and, if it found a Kubernetes service account token, attempted to read cluster secrets and create privileged pods for persistence. In at least one case, the attack was discovered because the malware accidentally caused a fork bomb and froze the machine.
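To make the .pth mechanism concrete: Python's `site` module executes any line in a `.pth` file that begins with `import` followed by a space or tab, every time the interpreter starts. The sketch below lists such lines in a given directory. The function name is ours, and a hit is only a prompt for review, since some legitimate tools (editable installs, for example) also ship executable `.pth` lines.

```python
import sysconfig
from pathlib import Path


def find_executable_pth_lines(site_dir):
    """Return (file name, line number, line) for each .pth line that runs code.

    The `site` module treats a .pth line starting with "import" plus a
    space or tab as code to execute; other lines are path entries.
    """
    findings = []
    for pth in sorted(Path(site_dir).glob("*.pth")):
        text = pth.read_text(encoding="utf-8", errors="replace")
        for lineno, line in enumerate(text.splitlines(), start=1):
            if line.startswith(("import ", "import\t")):
                findings.append((pth.name, lineno, line.strip()))
    return findings


if __name__ == "__main__":
    # Scan the current environment's site-packages directory.
    site_packages = sysconfig.get_paths()["purelib"]
    for name, lineno, line in find_executable_pth_lines(site_packages):
        print(f"{site_packages}/{name}:{lineno}: {line}")
```

A scan like this is a detection aid after the fact. It does not stop the hook from running, which is why the rest of this post focuses on what the process is allowed to do once it does run.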

That was lucky. The rest of the attack design was not.

The important thing is not just what this payload did. It is how normal the triggering action was.

A tool installed a dependency. An MCP-related workflow pulled it transitively. A developer machine or CI environment did what it normally does every day.

That is exactly where AI agents make this problem worse.

Agents multiply the blast radius of supply chain risk

Humans install packages. Agents will install more of them, more often, with less hesitation, across more environments.

That is not a criticism of agents. It is just what happens when you give software a loop and a goal.

An agent trying to complete a task will often do things like:

- install a missing package

- upgrade a dependency to satisfy a version constraint

- pull in an MCP server or tool wrapper

Those are useful behaviors. They are also supply chain expansion behaviors.

An agent does not need to be socially engineered into making those moves. It will make them because they are often the shortest path to completing the task.

So the old supply chain problem gets a new accelerant:

more installs, more transitive dependencies, more ephemeral environments, more automated retries, more package managers, more chances for a poisoned dependency to land somewhere sensitive.

And once the package is inside the environment, the agent can make the outcome worse by continuing to operate normally while the payload reads secrets, connects out, or plants persistence.

The real question is not whether the model intended harm. The real question is what the process was allowed to do once the package executed.

Why supply chain defense needs an execution layer

A supply chain compromise is upstream. The damage happens downstream.

That distinction matters.

No runtime control on your machine can prevent an attacker from stealing a maintainer credential, publishing a poisoned wheel, or compromising a release workflow upstream. That has to be fixed at the registry, maintainer, CI, and provenance layers.

But once the malicious package lands on a developer box, CI runner, container, or agent sandbox, it still has to do concrete things in order to hurt you:

- read secrets or credentials

- spawn processes

- open outbound network connections

- write files for persistence

- exfiltrate what it found

Those are not abstract security concepts. Those are operating system side effects.

That is where AgentSH helps.

AgentSH sits under the agent or tool runtime and governs file, network, and process activity at execution time. It does not need the model to realize something is malicious. It does not need the package manager to warn in time. It does not need the prompt to say the right thing.

It evaluates what the process is actually trying to do.

That changes the outcome.

What AgentSH could have done in an incident like LiteLLM

AgentSH would not have prevented the LiteLLM publisher compromise itself. It would not have stopped the bad package from existing on PyPI.

What it could have done is much more practical:

1. Block secret harvesting

If the package tries to read ~/.ssh, cloud credentials, .env files, kubeconfig, service account tokens, Terraform state, or other sensitive material outside the workspace, those reads can be denied or gated.

A package can only steal what it can actually open.

2. Block exfiltration

If the payload tries to connect to an unknown domain, a raw IP, cloud metadata, internal networks, or the Kubernetes API, those outbound connections can be blocked, redirected, or forced through an approved proxy.

A package can only exfiltrate what it can actually send.

3. Block process explosions and runaway subprocess behavior

In the LiteLLM case, one of the malicious versions used a .pth startup hook that spawned a child Python process, which then re-triggered the same .pth on interpreter startup and caused an exponential fork bomb. That specific failure mode was a bug in the malware, but it is exactly the kind of thing runtime controls should catch early.

With execution-layer policy, you can limit which child processes may be spawned during installation, alert on suspicious subprocess recursion, and kill or deny runaway behavior before it turns a compromised install into a machine-wide outage.

A package can only turn into a process storm if it is allowed to keep spawning.
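One way to picture "suspicious subprocess recursion" is as an unusually long chain of interpreters launching interpreters. The sketch below walks a snapshot of the process table and counts consecutive Python ancestors. The table layout, the function name, and the threshold are all assumptions for illustration; a real control would read this from the operating system at spawn time.

```python
def interpreter_chain_depth(pid, table, prefix="python"):
    """Count consecutive processes named like `prefix`, walking from
    `pid` up through its parents.

    `table` maps pid -> (parent pid, executable name).
    """
    depth = 0
    seen = set()  # guards against cycles in a malformed snapshot
    while pid in table and pid not in seen:
        seen.add(pid)
        ppid, name = table[pid]
        if not name.startswith(prefix):
            break
        depth += 1
        pid = ppid
    return depth


# Hypothetical snapshot: pip starts python3, which keeps re-spawning itself.
table = {
    1: (0, "init"),
    100: (1, "pip"),
    101: (100, "python3"),
    102: (101, "python3"),
    103: (102, "python3"),
    104: (103, "python3"),
}

MAX_CHAIN_DEPTH = 3  # assumed threshold, tune per environment
depth = interpreter_chain_depth(104, table)
if depth > MAX_CHAIN_DEPTH:
    print(f"alert: python chain depth {depth} during install")
```

Depth alone is a coarse signal, so it works best alongside limits on total children and spawn rate.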

4. Block persistence

If the payload tries to drop files into startup paths, systemd user directories, shell init files, or other persistence locations, those writes can be denied.

A package can only persist if it can actually write where persistence lives.

5. Preserve the audit trail

This is the part many teams miss.

When people talk about containment, they often imagine losing visibility. In practice, good runtime enforcement gives you more usable evidence, not less.

Instead of finding out later that a package might have done something, you can record that it attempted to:

- open ~/.aws/credentials

- read ~/.kube/config

- connect to an unapproved domain

- write ~/.config/systemd/user/...

- spawn a suspicious child process during install

That is far more actionable than a vague IOC list after the fact.
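As a rough picture of what "runtime logs with policy decisions attached" could look like, here is a minimal sketch of one audit record serialized as a JSON line. The field names and values are hypothetical and are not AgentSH's log format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    timestamp: str   # ISO 8601, UTC
    pid: int
    operation: str   # e.g. "file_open", "net_connect", "process_spawn"
    target: str      # path, host, or command involved
    decision: str    # "allow", "deny", or "approve"
    rule: str        # which policy rule produced the decision


def to_json_line(event):
    """Serialize one event as a single JSON line for append-only logs."""
    return json.dumps(asdict(event), sort_keys=True)


event = AuditEvent(
    timestamp=datetime.now(timezone.utc).isoformat(),
    pid=4242,
    operation="file_open",
    target="~/.aws/credentials",
    decision="deny",
    rule="files.deny[~/.aws/**]",
)
print(to_json_line(event))
```

Recording the rule next to the decision is the design choice that matters here: it lets a responder see not only what was attempted but why it was stopped.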

And it matters even more with agents, because agents create long, busy, high-churn execution traces. If you do not have runtime logs with policy decisions attached, incident response becomes guesswork.

Example policy ideas that would have helped

These examples are illustrative, not product syntax. The point is the control model.

Default: workspace-only file access

    files:
      default: deny
      allow:
        - /workspace/**
        - /tmp/**
        - /var/tmp/**
      deny:
        - ~/.ssh/**
        - ~/.aws/**
        - ~/.config/gcloud/**
        - ~/.azure/**
        - ~/.kube/**
        - ~/.terraform.d/**
        - **/.env
        - **/.env.*
        - **/*.pem
        - **/*.key

This alone changes a lot. Most agent tasks do not need unrestricted access to a developer's home directory.
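The control model behind a file policy like this can be sketched in a few lines: deny rules win, allow rules come second, and anything unmatched is denied. This is an illustration under simplifying assumptions, not how AgentSH evaluates policy. It uses `fnmatch`, whose `*` also matches `/`, it takes the home directory as a hypothetical `home` parameter, and it does not resolve symlinks, which a real enforcement layer would need to handle.

```python
import posixpath
from fnmatch import fnmatchcase

ALLOW = ["/workspace/**", "/tmp/**", "/var/tmp/**"]
DENY = [
    "~/.ssh/**", "~/.aws/**", "~/.kube/**",
    "**/.env", "**/.env.*", "**/*.pem", "**/*.key",
]


def _expand(pattern, home):
    """Replace a leading "~/" with the given home directory."""
    return home + pattern[1:] if pattern.startswith("~/") else pattern


def file_decision(path, home="/home/dev"):
    """Return "allow" or "deny" for a path: deny first, then allow, else deny."""
    # Collapse ".." so /workspace/../home/dev/.ssh is judged by where it lands.
    path = posixpath.normpath(path)
    if any(fnmatchcase(path, _expand(p, home)) for p in DENY):
        return "deny"
    if any(fnmatchcase(path, _expand(p, home)) for p in ALLOW):
        return "allow"
    return "deny"


for candidate in (
    "/workspace/src/app.py",
    "/workspace/.env",
    "/home/dev/.aws/credentials",
    "/workspace/../home/dev/.ssh/id_ed25519",
):
    print(candidate, "->", file_decision(candidate))
```

Checking deny rules before allow rules is what keeps a secret such as `/workspace/.env` protected even though it sits inside an allowed directory.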

Egress only to approved destinations

    network:
      default: deny
      allow_domains:
        - api.openai.com
        - api.anthropic.com
        - github.com
        - pypi.org
        - files.pythonhosted.org
        - registry.npmjs.org
      deny:
        - 169.254.169.254
        - 10.0.0.0/8
        - 172.16.0.0/12
        - 192.168.0.0/16
        - kubernetes.default.svc

A compromised package should not be able to call home to arbitrary infrastructure just because the environment has outbound Internet access.
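A default-deny egress check of this shape might be sketched as follows, using Python's standard `ipaddress` module. The function name and return format are assumptions. The sketch only judges the destination as written; a real control would also need to check the address an allowed domain actually resolves to.

```python
import ipaddress

ALLOW_DOMAINS = {
    "api.openai.com", "api.anthropic.com", "github.com",
    "pypi.org", "files.pythonhosted.org", "registry.npmjs.org",
}
DENY_HOSTS = {"kubernetes.default.svc"}
DENY_NETWORKS = [
    ipaddress.ip_network(cidr)
    for cidr in ("169.254.169.254/32", "10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]


def egress_decision(host):
    """Return (decision, reason) for an outbound destination given as a string."""
    host = host.rstrip(".").lower()
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        addr = None  # not an IP literal, treat as a hostname

    if addr is not None:
        # Raw IPs are never on the domain allow list, so they are always
        # denied; the reason distinguishes metadata/internal ranges.
        if any(addr in net for net in DENY_NETWORKS):
            return ("deny", "blocked network")
        return ("deny", "raw IP not allow-listed")
    if host in DENY_HOSTS:
        return ("deny", "blocked host")
    if host in ALLOW_DOMAINS:
        return ("allow", "approved domain")
    return ("deny", "default")


for dest in ("pypi.org", "169.254.169.254", "10.1.2.3", "evil.example"):
    print(dest, "->", egress_decision(dest))
```

Keeping the reason alongside the decision makes a blocked cloud metadata lookup easy to tell apart from an ordinary unknown domain when reviewing logs.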

No writes outside the workspace except approved temp paths

This example is about write control. The earlier file policy is about reads. In practice, you usually want both, because a package that cannot read secrets can still try to plant persistence if it can write freely.

    writes:
      default: deny
      allow:
        - /workspace/**
        - /tmp/**
        - /var/tmp/**
      deny:
        - ~/.config/**
        - ~/.local/share/**
        - ~/.bashrc
        - ~/.zshrc
        - ~/.profile
        - ~/.config/systemd/**
        - /etc/**

That is how you cut off persistence without breaking normal agent work.

Process controls for install-time behavior

    process:
      allow:
        - python
        - python3
        - pip
        - uv
        - git
        - node
        - npm
      deny:
        - curl
        - wget
        - nc
        - ssh
        - socat
      limits:
        max_child_processes: 20
        max_spawn_rate_per_minute: 30
      alert_on_spawn:
        - python child processes launched from package install hooks
        - recursive python startup chains
        - shell execution during dependency installation

You do not have to ban package installation. You do need visibility when installation starts doing things that look nothing like installation, including recursive child-process storms.
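The two limits in that policy can be illustrated with a small sliding-window counter. This is a sketch of the idea, with a hypothetical class name and a caller-supplied clock so the behavior is easy to reason about; it is not an enforcement mechanism by itself.

```python
from collections import deque


class SpawnLimiter:
    """Track live children and spawns in the last 60 seconds."""

    def __init__(self, max_children=20, max_per_minute=30):
        self.max_children = max_children
        self.max_per_minute = max_per_minute
        self.live = 0
        self.recent = deque()  # timestamps of allowed spawns

    def on_spawn(self, now):
        """Return True if a spawn at time `now` (seconds) is within limits."""
        # Drop spawn timestamps that have aged out of the 60-second window.
        while self.recent and now - self.recent[0] >= 60:
            self.recent.popleft()
        if self.live >= self.max_children or len(self.recent) >= self.max_per_minute:
            return False
        self.recent.append(now)
        self.live += 1
        return True

    def on_exit(self):
        """Record that one child process has exited."""
        self.live = max(0, self.live - 1)


# Simulate a fork bomb: 100 spawn attempts at once, and nothing exits.
limiter = SpawnLimiter()
allowed = sum(limiter.on_spawn(0.0) for _ in range(100))
print(f"allowed {allowed} of 100 spawn attempts")
```

With the default values from the policy above, the live-children cap is reached first, so the simulated storm stalls at 20 processes instead of growing until the machine freezes.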

Approval gate for unusually broad dependency actions

    approvals:
      require_for:
        - install or upgrade packages outside approved lockfile flow
        - first-time network access to new domains
        - reading secrets outside declared task scope

This is especially useful for agentic workflows. The agent can keep working, but crossing a higher-risk boundary triggers a human approval path outside the agent.
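The three approval triggers can be expressed as a single predicate over a proposed action. The action dictionary shape, the key names, and the function name below are all hypothetical, chosen only to show the decision logic.

```python
def needs_approval(action, locked_packages, known_domains):
    """Return True if the proposed action should pause for human approval.

    `action` is a dict with a "kind" key plus kind-specific fields.
    """
    kind = action["kind"]
    if kind == "install":
        # Anything not already pinned in the lockfile is out of the approved flow.
        return action["package"] not in locked_packages
    if kind == "connect":
        # First contact with a domain the environment has not used before.
        return action["domain"] not in known_domains
    if kind == "read_secret":
        # Secrets are only readable without review when the task declared them.
        return not action.get("in_task_scope", False)
    return False


locked = {"requests==2.32.3"}
known = {"pypi.org", "github.com"}

print(needs_approval({"kind": "install", "package": "requests==2.32.3"}, locked, known))
print(needs_approval({"kind": "install", "package": "somepkg==9.9.9"}, locked, known))
print(needs_approval({"kind": "connect", "domain": "new-host.example"}, locked, known))
```

The important property is that the check runs outside the agent, so the agent cannot talk itself past the gate.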

The practical takeaway

You should absolutely keep improving the front of the funnel:

- pin versions

- use lockfiles

- prefer trusted publishing and provenance

- reduce CI token scope

- verify artifacts against source

- quarantine suspicious releases quickly

But do not stop there.

Assume some bad package will eventually get in somewhere. Maybe through a direct install. Maybe through a transitive dependency. Maybe because an agent decided to install what looked like the right thing to finish a task.

When that happens, what matters is whether the code can actually do anything dangerous.

That is the role of the execution layer.

At Canyon Road, that is exactly the problem we are focused on with AgentSH.

Not replacing upstream supply chain security.

Making it far less likely that one bad package turns into stolen credentials, silent persistence, and a long night of forensics.

And doing it while retaining the audit trail you will wish you had once the incident starts.

Because in the agent era, "it only installed a package" is going to be the beginning of a lot more incident reports.

References

LiteLLM incident

- Security Update: Suspected Supply Chain Incident -- LiteLLM's official disclosure

- Supply Chain Attack in litellm 1.82.8 on PyPI -- FutureSearch technical analysis including .pth hook mechanism and fork bomb details

- Compromised litellm PyPI Package Delivers Multi-Stage Credential Stealer -- Sonatype's payload analysis

- Three's a Crowd: TeamPCP Trojanizes LiteLLM in Continuation of Campaign -- Wiz analysis of the LiteLLM compromise and its connection to the broader TeamPCP campaign

- How a Poisoned Security Scanner Became the Key to Backdooring LiteLLM -- Snyk's writeup on the credential chain from Trivy to LiteLLM

- LiteLLM Compromised on PyPI: Tracing the March 2026 TeamPCP Supply Chain Campaign -- Datadog Security Labs' end-to-end campaign analysis

- The Library That Holds All Your AI Keys Was Just Backdoored -- ARMO's Kubernetes-focused analysis of the persistence mechanism

- Popular LiteLLM PyPI Package Backdoored to Steal Credentials, Auth Tokens -- BleepingComputer coverage

- Malicious PyPI Package -- LiteLLM Supply Chain Compromise -- Truesec's technical breakdown

- CRITICAL: Malicious litellm_init.pth in litellm 1.82.8 -- Original GitHub issue with community triage

Related TeamPCP campaign

- Trivy Compromised by TeamPCP -- Wiz analysis of the initial Trivy compromise that started the chain

- KICS GitHub Action Compromised: TeamPCP Strikes Again -- Wiz analysis of the Checkmarx KICS compromise

- TeamPCP Supply Chain Attack Campaign Targets Trivy, Checkmarx (KICS), and LiteLLM -- Arctic Wolf's campaign overview

- Detecting, Investigating, and Defending Against the Trivy Supply Chain Compromise -- Microsoft's detection and response guidance

- TeamPCP Software Supply Chain Attack Spreads -- ReversingLabs' campaign tracking

- Trojanization of Trivy, Checkmarx, and LiteLLM Solutions -- Kaspersky's overview of the full attack chain

